I’m going to say something that might save you a quarter… maybe a whole year.

When results start wobbling, most teams assume it’s a performance problem.

As an agency owner who lives in intent and behavioral data every day, I’ll tell you what it usually is:

It’s a data quality problem wearing a “marketing” costume.

You feel it as higher costs, lower conversion rates, smaller retargeting pools, and campaigns that work for a minute then fade. But the real issue isn’t your creative or your platform. It’s the foundation you’re building on.

The quiet mistake smart teams make

It starts with reasonable-sounding decisions:

“This data provider is cheaper.”

“It looks basically the same.”

“An email is an email.”

That logic is how performance slowly breaks without an obvious smoking gun.

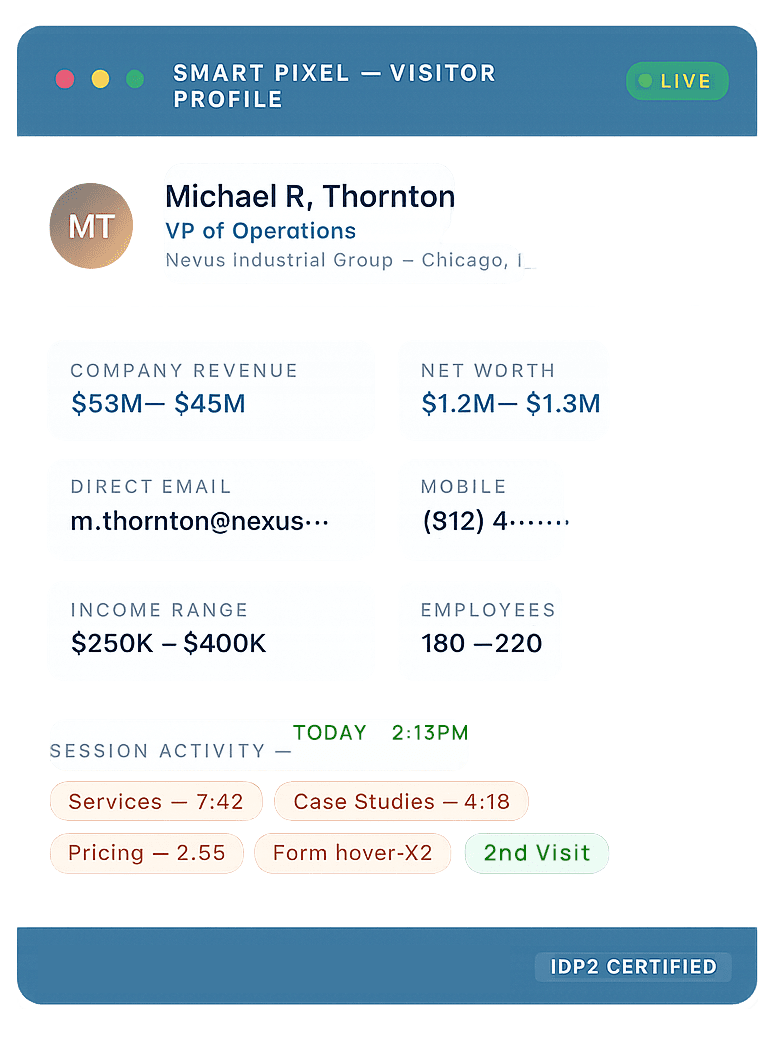

Because modern marketing and growth are built on identity. If you can’t reliably connect signals to real people, everything downstream becomes less predictable.

The part nobody says out loud

Not all data is created the same.

Some companies collect and verify data at the source.

Others resell and recombine data that has already been resold and recombined.

Every time data gets rebuilt from someone else’s dataset, accuracy drops. Think of it like making photocopies. The first copy is fine. The next gets fuzzy. The next loses detail. Eventually you’re working with something that looks usable… until you try to scale with it.

And when accuracy drops, you don’t just lose “data quality.” You lose outcomes:

Match rates fall

Targeting gets broader and more expensive

Retargeting pools shrink

Personalization gets weaker

Reporting becomes harder to trust

Sales teams complain lead quality is “off”

This is why performance feels fragile.

Data decay is real, and it’s constant

Even great data doesn’t stay great on its own.

People change jobs. Emails go dead. Phone numbers change. Companies restructure. Titles shift. Decision makers move. In B2B, this happens faster than most teams want to admit.

So if your dataset started out “pretty good” but hasn’t been continuously verified and refreshed, it doesn’t stay “pretty good.”

It quietly gets worse every month.

And that shows up as:

Campaigns spiking then fading

More time rebuilding audiences

Higher acquisition costs

Lower conversion rates

Less predictable pipeline

It’s not that your marketing team suddenly forgot how to market. It’s that your targeting foundation drifted away from reality.

Why this matters even more in high-ticket B2B

In high-ticket B2B, you don’t need hundreds of extra conversions to feel the difference.

A small number of “right-fit” opportunities can change a quarter. A few missed ones can do the same, just in the wrong direction.

If your data is inaccurate, you’re not just wasting ad dollars. You’re missing conversations with buyers who were already looking.

What I’d look at if I were in your seat

If you’re a CEO, marketing leader, or analytics person, here are the simple questions that cut through the noise:

Where does our data actually come from?

Source-verified, or resold and stitched?

How often is it verified and refreshed?

Because “one-time enrichment” isn’t a strategy.

Are match rates and retargeting pools staying stable over time?

If they’re shrinking, data quality is a prime suspect.

Are we compounding learning, or constantly rebuilding?

If you’re rebuilding often, the foundation is shifting.

The takeaway

When the data underneath your targeting is stable and continuously verified, performance becomes steadier:

Match rates stabilize

Retargeting works longer

Intent signals stay tied to real people

Results become easier to improve and forecast

When the data is a derivative of a derivative and no one is maintaining it, performance becomes a guessing game, no matter how good your creative is.

So if things feel unstable, don’t start with “What’s wrong with our ads?”

Start with:

“How accurate is the data underneath everything we’re doing, and how often is it being refreshed?”

That one question has a way of making the real problem show itself.